How AI policy in schools is shaping inclusive classrooms in 2026

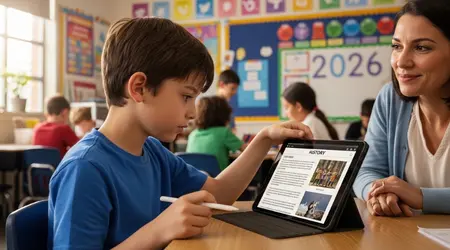

For me, the story of how AI policy is shaping inclusive classrooms in 2026 began not in a boardroom, but in a quiet corner of a Year 4 classroom in Birmingham.

I was observing Leo, a ten-year-old with non-verbal autism, as he used a tablet to navigate a history lesson.

For years, Leo’s inclusion meant physical presence: sitting in the room while the curriculum sailed over his head.

That afternoon, an adaptive interface translated his eye movements into sentence structures, allowing him to challenge a classmate’s timeline of the Industrial Revolution.

It was a moment of profound agency, yet it hung on a fragile thread of school regulation.

The Architecture of Modern Inclusion

- The Shift from Presence to Participation: Moving beyond physical integration toward genuine academic engagement.

- The Regulatory Guardrails: How new frameworks protect student privacy while fostering responsible innovation.

- The Literacy Gap: Why teacher training remains the essential engine of successful policy implementation.

- Socioeconomic Barriers: Addressing the risk of an “accessibility divide” in underfunded school districts.

- Future Projections: Anticipating the next five years of adaptive learning and cognitive support.

Why did we wait so long to regulate classroom intelligence?

The lag between technological capability and educational policy is a well-documented chasm.

For nearly a decade, assistive tools were often treated as “add-ons”: expensive peripherals kept within special education departments.

What we are seeing now is a fundamental reversal. Policies drafted this year are finally treating artificial intelligence as baseline infrastructure.

By embedding accessibility into core procurement rules, schools ensure that software isn’t just “functional” for the average student, but adaptive for every learner.

There is a historical weight to the “medical model” of disability that rarely enters this debate. For decades, education systems viewed the student as someone to be “fixed” to fit the school.

Current frameworks suggest the opposite: the environment must be fluid enough to accommodate the individual.

When we look at how AI policy is shaping inclusive classrooms in 2026, we see a departure from the rigid, one-size-fits-all digital textbooks of the past.

We are moving toward a living curriculum that adjusts its reading level, visual density, and sensory output in real time.

How does the 2026 framework protect student data?

A central tension in any inclusive policy is the trade-off between personalization and privacy.

To work effectively, AI needs data: it needs to understand how a student with dyslexia processes phonemes or how a student with ADHD responds to visual stimuli.

The 2026 guidelines have introduced “Privacy by Design for Neurodiversity.”

This ensures that the biometric and cognitive data collected to help a child learn isn’t being harvested by third-party vendors for profiling or future employability scoring.

Without these protections, inclusion could easily become a surveillance trap.

If a school records every cognitive struggle a child has to “personalize” their lesson, the ownership of that record becomes a significant ethical concern.

The current policy shift mandates that all adaptive learning data be siloed and deleted after the academic year, unless parents explicitly consent to its retention for longitudinal support.

It’s a delicate balance that aims to provide the benefits of high-tech intervention without the sting of permanent digital labeling.
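For readers who think in code, the retention rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `LearningRecord` structure, its field names, and the `purge` helper are hypothetical and do not come from any published framework or vendor system.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class LearningRecord:
    """One adaptive-learning record (hypothetical structure)."""
    student_id: str
    academic_year_end: date             # last day of the year the data was collected in
    longitudinal_consent: bool = False  # explicit parental opt-in; off by default


def must_delete(record: LearningRecord, today: date) -> bool:
    """Return True when the record should be purged under the rule above."""
    year_is_over = today > record.academic_year_end
    return year_is_over and not record.longitudinal_consent


def purge(records: list[LearningRecord], today: date) -> list[LearningRecord]:
    """Keep only the records that may still be retained."""
    return [r for r in records if not must_delete(r, today)]
```

The design choice worth noticing is the default: consent is `False` unless a parent actively grants it, so forgetting to ask results in deletion rather than retention.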

What actually changed after the 2026 policy overhaul?

| Feature | Traditional Inclusion (Pre-2026) | AI-Driven Inclusion (2026 Standards) |
| --- | --- | --- |
| Learning Plans | Static IEPs reviewed annually. | Dynamic Learning Profiles updated frequently. |
| Exam Support | Human scribes or readers often required. | Integrated voice-to-text and natural language processing. |
| Software Access | Specialized “Special Needs” software silos. | Universal Design for Learning built into all apps. |
| Media Accessibility | Manual closed-captioning for video content. | Real-time, multi-modal translation and transcription. |

Who is truly benefiting from these technological guardrails?

There is a structural detail that is often overlooked: the student in the “middle.”

While AI policy is often marketed through the lens of specific disabilities, the impact is felt deeply by students with “invisible” challenges.

Think of the student with mild processing delays who usually fades into the background.

Under the new mandates, the software they use for basic algebra silently offers more scaffolding when it detects a pattern of hesitation. This isn’t just “help”; it is the invisible architecture of equity.

The pattern repeats across different demographics.

AI policy in 2026 is also shaping inclusive classrooms by forcing publishers to move away from static, inaccessible formats.

In the past, a poorly formatted PDF that a screen reader could not navigate was a barrier for a visually impaired student.

Today’s policy requires “Liquid Content”: data that can be reflowed into Braille, audio, or high-contrast text instantly.

The beneficiary isn’t just the student with a disability; it is also the teacher who no longer spends hours manually adapting worksheets.

Can technology bridge the socioeconomic divide in education?

There are valid reasons to question the optimism surrounding these shifts. In wealthy districts, the 2026 policy acts as a gateway to cutting-edge cognitive support.

In underfunded areas, the same policy can feel like an unfunded mandate.

If the law says every classroom must be “AI-inclusive” but provides no budget for hardware, we risk creating a new form of digital segregation.

Inclusion isn’t just a software update; it is a resource allocation problem that requires consistent public investment.

Historically, we have seen this with the introduction of high-speed internet. Those with access thrived while others fell behind.

The 2026 policy attempts to mitigate this by tying “Inclusion Grants” to federal funding.

To receive tech subsidies, schools must prove that tools are being used across the entire student body, not just in specific tracks.

This is an attempt to ensure that the “intelligence” being added to the classroom is a public good rather than a private luxury.

Why is teacher “AI Literacy” the real bottleneck?

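Imagine an experienced educator, skilled in child psychology, suddenly asked to interpret a dashboard suggesting a student has a “90% probability of a reading fatigue episode.” Without training in how to read such a prediction, even the best teacher cannot turn it into a sound classroom decision.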

The policy recognizes that the human element is irreplaceable. It mandates that AI should never make a final “placement” decision for a student.

The technology proposes; the teacher disposes. This keeps ethical responsibility in human hands, preventing a “black box” effect where an algorithm decides a child’s academic future.
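The “technology proposes; the teacher disposes” principle can be sketched as a simple gate. This is a minimal illustration, not how any real classroom platform works: the `Recommendation` class and the `review` and `can_apply` helpers are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    """An AI-generated suggestion awaiting human review (hypothetical structure)."""
    student_id: str
    suggestion: str
    reviewed_by: Optional[str] = None  # name of the educator who reviewed it
    approved: Optional[bool] = None    # None means no decision has been made yet


def review(rec: Recommendation, educator: str, approve: bool) -> Recommendation:
    """Record the educator's decision on a recommendation."""
    rec.reviewed_by = educator
    rec.approved = approve
    return rec


def can_apply(rec: Recommendation) -> bool:
    """A suggestion takes effect only after a named educator has approved it."""
    return rec.reviewed_by is not None and rec.approved is True
```

In this sketch an unreviewed suggestion and a rejected one are treated the same way: neither can be applied, so the algorithm alone never changes what happens to a student.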

The most successful classrooms are those where the teacher uses AI as a co-pilot.

When the rote tasks of accessibility, such as generating captions or simplifying complex text, are automated, the teacher is freed to provide the emotional support and mentorship that technology cannot replicate.

We are seeing that AI policy in 2026 is shaping inclusive classrooms primarily by returning the “human” to the center of the room.

When mechanical barriers are lowered by software, social connections can finally flourish.

Reflecting on the Human Horizon

The true measure of these policies won’t be found in the sophistication of the algorithms, but in the confidence of the children using them.

We are moving away from an era where “special needs” was a label that defined a child’s limits. Instead, we are entering a phase where technology is used to dissolve those limits before they take root.

It is a journey involving ethical questions and budget battles, but the direction is clear. Inclusion is no longer a charitable act; it is a functional requirement of a modern society.

As we watch how AI policy is shaping inclusive classrooms in 2026, we are essentially watching the development of a more empathetic system, one that understands a student’s potential is only as limited as the tools we provide.

Frequently Asked Questions

Is AI replacing special education teachers?

No. The policy states that AI is a tool to support educators. Its role is to handle time-consuming tasks like transcription and content adaptation, allowing teachers to focus on complex emotional and instructional support.

Will my child’s data be used to track them later in life?

Current 2026 regulations include strict data-siloing requirements. Schools are prohibited from sharing cognitive or behavioral data with third parties, including potential employers or universities.

How do schools afford this technology?

Many jurisdictions have introduced “Inclusion Tech Grants” for adaptive hardware. Additionally, the policy requires all standard educational software to have these features built in, reducing the need for expensive “add-on” programs.

Does this technology make students “lazy”?

On the contrary, by removing physical and sensory barriers, students can engage with more difficult concepts.

A student who can finally access a book through audio or simplified text is working harder on the content rather than struggling with the medium.

What happens if the AI makes a mistake?

The 2026 framework includes a “Human-in-the-Loop” requirement. All AI recommendations must be reviewed by a qualified educator, and parents have the right to contest any automated assessment.