Scene-Aware AR: From Identifying Obstacles to Understanding Context

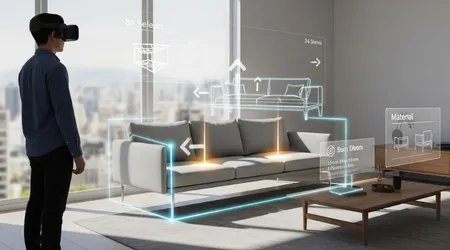

Scene-Aware AR is the next generation of Augmented Reality, moving well beyond simple digital overlays and interactive games.

This technology gives devices the ability to interpret the physical world around the user, not just to map it.

It transforms AR from a visual effect into a crucial, intelligent layer of environmental comprehension.

For assistive technology, this shift is revolutionary. AR systems can move from merely identifying basic obstacles to providing the rich, contextual information needed for complex tasks and real-time navigation.

This depth of understanding unlocks new levels of independence and accessibility.

What Defines the Evolution of AR to Scene-Aware AR?

The initial generation of AR focused primarily on spatial mapping, accurately placing virtual objects onto real surfaces.

While impressive, these systems lacked the ability to classify those surfaces. They could recognize a flat plane but not differentiate a floor from a table or a doorway.

Scene-Aware AR, leveraging advanced AI and computer vision, adds semantic segmentation to the mix. The system not only maps the geometry of the room but also labels the objects it recognizes within it.

The device truly “sees” the world, identifying objects like “chair,” “stairs,” and “fire extinguisher.”

How Does Semantic Segmentation Enable Deeper Understanding?

Semantic segmentation allows the AR system to break down a visual scene pixel by pixel, assigning a category label to each.

This gives the device a labeled map of the environment, loosely analogous to the way a person recognizes what they are looking at.

For instance, an AR headset can distinguish between an empty sidewalk and a busy road, recognizing the potential danger posed by the latter.

This classification capability is the fundamental basis for providing truly intelligent assistance to users with sensory or cognitive disabilities.
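The per-pixel labeling described above can be sketched in a few lines of Python. This is a minimal illustration rather than a production model: the class list, the `segment` and `class_fractions` helpers, and the hand-written scores are all assumptions made for the example. A real system would get the per-pixel scores from a trained neural network.

```python
# Hypothetical label set; a real segmentation model defines its own classes.
CLASSES = ["sidewalk", "road", "chair", "stairs"]


def segment(scores):
    """Label each pixel with its highest-scoring class.

    `scores` is a grid (list of rows); each pixel is a list holding one
    score per entry in CLASSES, as a segmentation network might output.
    """
    label_map = []
    for row in scores:
        labeled_row = []
        for pixel in row:
            best = max(range(len(CLASSES)), key=lambda c: pixel[c])
            labeled_row.append(CLASSES[best])
        label_map.append(labeled_row)
    return label_map


def class_fractions(label_map):
    """Return the share of pixels assigned to each class that appears."""
    counts = {}
    total = 0
    for row in label_map:
        for label in row:
            counts[label] = counts.get(label, 0) + 1
            total += 1
    return {label: n / total for label, n in counts.items()}


# Toy 2x2 "image" with made-up scores, listed in CLASSES order.
scores = [
    [[0.9, 0.1, 0.0, 0.0], [0.2, 0.7, 0.1, 0.0]],
    [[0.8, 0.1, 0.1, 0.0], [0.1, 0.8, 0.0, 0.1]],
]
label_map = segment(scores)
print(label_map)
print(class_fractions(label_map))
```

In this toy scene the left column is labeled as sidewalk and the right column as road, which is the kind of distinction an assistive device could turn into a warning.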

Why is Real-Time Object Recognition Crucial for Navigation?

Real-time, low-latency object recognition is the operational heart of effective assistive AR.

A system must identify a hazard, such as a curb or a misplaced object, quickly enough to deliver a timely warning. Delay can render the assistance useless or even dangerous.

This necessity drives the integration of edge computing and specialized AI chips within modern AR glasses.

Processing data locally avoids a network round trip and minimizes lag, so feedback arrives in time for safe, dynamic navigation in uncontrolled public spaces.
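A rough way to see why latency matters is to compare how far a user walks while the system is still processing. The sketch below is only illustrative: the walking speed, reaction time, and the two latency figures are assumed values, not measurements from any device, and the function names are hypothetical.

```python
def distance_during_delay(walking_speed_mps, latency_s):
    """Distance the user covers while the system is still processing."""
    return walking_speed_mps * latency_s


def warning_is_timely(hazard_distance_m, walking_speed_mps, latency_s,
                      reaction_time_s):
    """True if the user can still react before reaching the hazard."""
    travelled = walking_speed_mps * (latency_s + reaction_time_s)
    return travelled < hazard_distance_m


# Assumed figures, for illustration only.
WALKING_SPEED = 1.4   # meters per second
REACTION_TIME = 1.0   # seconds to respond to an audio or haptic cue
HAZARD_DISTANCE = 2.0  # meters between the user and a curb

for name, latency in [("on-device", 0.05), ("cloud round trip", 0.5)]:
    ok = warning_is_timely(HAZARD_DISTANCE, WALKING_SPEED, latency,
                           REACTION_TIME)
    print(name, "->", "timely" if ok else "too late")
```

Under these assumptions, the half-second round trip is enough to push the warning past the point where the user can still stop, while the on-device path leaves a margin.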

How Does Scene-Aware AR Benefit Individuals with Visual Impairments?

For individuals with low vision or blindness, Scene-Aware AR acts as a dynamic, intelligent visual interpreter, transforming environmental chaos into structured, actionable information.

It significantly enhances navigational safety and boosts situational awareness in unfamiliar environments.

The technology can deliver information via haptic feedback or spatial audio cues, allowing the user to experience the environment not through their eyes, but through a filtered, augmented stream of semantic data.

This turns the environment into a rich tapestry of labeled, identifiable points of interest.

What Role Does Contextual Labeling Play in Daily Life?

Contextual labeling goes beyond simply saying “chair.” It can read and interpret complex environmental text and signage.

An AR system can look at a wall and announce: “Emergency Exit, 5 meters forward on the right” or “The milk is on Shelf B, third item from the left.”

A user approaches a bus stop. The AR system, recognizing the bus schedule board, reads the next arrival time for a specified route and audibly announces the route number and expected delay.

This makes independent travel far more reliable and stress-free.
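An announcement such as the emergency exit example can be assembled from a recognized label and its position relative to the user. The following is a minimal sketch under assumed inputs: the `describe` function, its parameters, and the half-meter "straight ahead" band are hypothetical, and a real system would derive the offsets from its own tracking data.

```python
def describe(label, forward_m, lateral_m, center_band_m=0.5):
    """Turn a label and a relative position into a spoken phrase.

    forward_m: distance ahead of the user, in meters.
    lateral_m: sideways offset in meters (positive = right, negative = left).
    center_band_m: offsets within this band count as straight ahead.
    """
    distance = round(forward_m)
    unit = "meter" if distance == 1 else "meters"
    if lateral_m > center_band_m:
        return f"{label}, {distance} {unit} forward on the right"
    if lateral_m < -center_band_m:
        return f"{label}, {distance} {unit} forward on the left"
    return f"{label}, {distance} {unit} straight ahead"


print(describe("Emergency Exit", 5.2, 1.0))
print(describe("Stairs", 1.0, -0.8))
print(describe("Door", 3.0, 0.1))
```

The resulting string could then be passed to whatever text-to-speech or haptic channel the device uses.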

How Does the Technology Mitigate Common Household Hazards?

In the home, Scene-Aware AR can drastically reduce the risk of accidents. It can identify the state of appliances, notifying a user that the oven is still hot or that the stove burner has been left on. This offers crucial, persistent safety monitoring.

It can also provide proactive warnings about misplaced items, recognizing a chair pulled slightly out from a table or a cord lying across a walkway.

This intelligent hazard detection, based on understanding normal room configurations, prevents trips and falls.
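One simple way to approximate this kind of check is to compare the floor footprint of each labeled object against a region that is normally kept clear. The sketch below assumes the system already provides labeled, axis-aligned footprints in meters; the `Box` type, the `find_walkway_hazards` helper, and all coordinates are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned footprint on the floor plane, in meters."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def overlaps(self, other):
        """True if the two footprints share any floor area."""
        return (self.x_min < other.x_max and other.x_min < self.x_max
                and self.y_min < other.y_max and other.y_min < self.y_max)


def find_walkway_hazards(objects, walkway):
    """Return labels of objects whose footprint intrudes on the walkway."""
    return [label for label, box in objects if box.overlaps(walkway)]


# Assumed layout: a 1 m wide walkway running 4 m along the room.
walkway = Box(0.0, 0.0, 1.0, 4.0)
objects = [
    ("chair", Box(0.8, 1.0, 1.4, 1.6)),   # pulled out into the path
    ("table", Box(1.5, 0.5, 2.5, 2.0)),   # clear of the path
    ("cord", Box(0.0, 3.0, 1.0, 3.05)),   # lying across the path
]
print(find_walkway_hazards(objects, walkway))
```

Here the chair and the cord are flagged, while the table, which sits outside the walkway, is not.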

What are the Ethical and Data Challenges of Semantic AR?

The power of Scene-Aware AR to collect and interpret vast amounts of highly personal environmental data introduces serious ethical questions regarding privacy and data security.

The device is, effectively, recording and cataloging the user’s entire physical world.

Companies developing these technologies must commit to stringent, transparent data governance models, ensuring that sensitive environmental scans and location data are processed locally and never stored without explicit user consent.

Trust is paramount for widespread adoption.

How Can We Ensure Data Privacy in Real-Time Mapping?

To protect privacy, developers can use “federated learning,” which trains the AI model across many devices and sends only model updates to the central cloud server, so the server never accesses the raw, sensitive environmental data.
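The server-side step of federated learning is often a weighted average of the model weights that each device computed locally (commonly called federated averaging). The sketch below shows only that averaging step, with toy weight vectors and sample counts as assumptions; local training, secure aggregation, and any real model are left out.

```python
def federated_average(updates):
    """Combine per-device model weights into one global model.

    `updates` is a list of (weights, num_samples) pairs. Each device
    trains on its own private scans and reports only its weights and
    how many samples it used; raw data never leaves the device.
    """
    total_samples = sum(n for _, n in updates)
    size = len(updates[0][0])
    return [
        sum(weights[i] * n for weights, n in updates) / total_samples
        for i in range(size)
    ]


# Toy example: two devices, a model with two parameters.
device_updates = [
    ([1.0, 2.0], 1),   # device A trained on 1 local sample
    ([3.0, 4.0], 3),   # device B trained on 3 local samples
]
print(federated_average(device_updates))  # [2.5, 3.5]
```

Weighting by sample count means a device that saw more data has proportionally more influence on the shared model.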

The processed map data should be strictly anonymized and localized.

Furthermore, users must have granular control over what specific data streams are collected and shared.

They need a simple, clear mechanism to toggle off features that involve external data transmission when in sensitive or private environments.
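Granular control of this kind could be modeled as a small settings object that every outgoing data stream must consult. This is a sketch under assumptions: the stream names, the `PrivacySettings` class, and the `may_transmit` method are hypothetical and not taken from any real AR platform.

```python
from dataclasses import dataclass


@dataclass
class PrivacySettings:
    """Per-stream sharing flags; everything is off by default."""
    share_location: bool = False
    share_scene_labels: bool = False
    share_model_updates: bool = False
    private_mode: bool = False  # master switch for sensitive places

    def may_transmit(self, stream):
        """True only if the stream is enabled and private mode is off."""
        if self.private_mode:
            return False
        return bool(getattr(self, f"share_{stream}", False))


settings = PrivacySettings(share_location=True)
print(settings.may_transmit("location"))      # True
print(settings.may_transmit("scene_labels"))  # False

settings.private_mode = True                  # e.g. at a doctor's office
print(settings.may_transmit("location"))      # False
```

Defaulting every flag to off and treating unknown stream names as blocked keeps the design on the side of not transmitting.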

Why Must Assistive Technology Avoid Algorithmic Bias?

The semantic labels and object recognition models must be trained on diverse, inclusive datasets to avoid algorithmic bias.

If the AI is trained predominantly on one type of architecture or specific consumer products, it may fail to accurately assist users in different cultural or socioeconomic settings.

An AR system trained only on modern Western kitchens might struggle to identify traditional cooking implements in a different region.

This failure in recognition means the assistive technology fails to provide equitable support for all user groups.

How Will Scene-Aware AR Evolve to Provide Cognitive Assistance?

The next frontier for Scene-Aware AR is providing assistance not just for physical navigation, but for cognitive function.

This involves recognizing the context of a task and guiding the user through multi-step processes, which is transformative for individuals with cognitive disabilities like dementia or severe ADHD.

This transition involves predicting user intent based on the environmental cues.

The system moves from merely labeling objects to understanding how those objects interact within a procedural workflow. The AR device becomes a cognitive coach.

How Does AR Provide Procedural Guidance?

For tasks like cooking or assembling furniture, the AR system can overlay step-by-step instructions directly onto the physical environment.

The system confirms each step before proceeding, minimizing errors and frustration.

For instance, the device recognizes a bag of flour on the counter and audibly prompts, “Now add two cups of flour,” then visually highlights the measuring cup.

This procedural scaffolding allows users to tackle tasks that were previously too complex.
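The confirm-then-proceed behavior can be sketched as a small step tracker that prompts only when the needed object is in view and advances only after confirmation. The `Step` and `TaskGuide` names, the second recipe step, and the way confirmation is signaled are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Step:
    prompt: str           # spoken instruction
    required_object: str  # label that must be visible before prompting
    highlight: str        # object to highlight visually


class TaskGuide:
    def __init__(self, steps):
        self.steps = steps
        self.index = 0

    @property
    def finished(self):
        return self.index >= len(self.steps)

    def next_prompt(self, visible_labels):
        """Return (prompt, highlight) for the current step, or None if the
        task is done or the required object is not in view yet."""
        if self.finished:
            return None
        step = self.steps[self.index]
        if step.required_object not in visible_labels:
            return None
        return step.prompt, step.highlight

    def confirm_step(self):
        """Advance to the next step once the current one is confirmed."""
        if not self.finished:
            self.index += 1


guide = TaskGuide([
    Step("Now add two cups of flour", "flour", "measuring cup"),
    Step("Now stir the mixture", "bowl", "spoon"),  # hypothetical step
])
print(guide.next_prompt({"bowl"}))           # None: flour not in view yet
print(guide.next_prompt({"flour", "bowl"}))  # prompt for the flour step
guide.confirm_step()
print(guide.next_prompt({"flour", "bowl"}))  # prompt for the stirring step
```

Keeping the advance in a separate `confirm_step` call mirrors the idea that the system should verify a step before moving on, however that verification is actually done.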

What is the Promise of Scene-Aware AR for Education?

Scene-Aware AR holds enormous potential in specialized education, particularly for hands-on, vocational training.

The technology can overlay dynamic schematics or safety warnings directly onto machinery, making abstract concepts concrete and immediate.

| AR Technology Level | Primary Capability | Assistive Functionality | Commercial Adoption Status (2025) |
| --- | --- | --- | --- |
| Basic Overlay AR | 2D Image Tracking | Simple visual pointers (e.g., location pins). | High (Mobile Apps) |
| Spatial Mapping AR | 3D Geometry and Surface Anchoring | Object placement, basic navigation. | Medium (Initial Headsets) |
| Scene-Aware AR | Semantic Segmentation, Object Classification | Contextual safety warnings, real-time labeling, procedural guidance. | Emerging/Rapid Growth |

The global market for assistive technology, heavily influenced by AR advancements, is projected to grow at a compound annual growth rate (CAGR) of over 15% between 2025 and 2030 (Source: MarketsandMarkets Global Report, 2024 projections), highlighting the heavy investment in this sector.
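To put the growth figure in perspective, a compound rate can be converted into an overall multiple. The short calculation below assumes exactly 15% per year over the five years from 2025 to 2030; since the cited projection is "over 15%", the result is a lower bound under that assumption, and the function name is hypothetical.

```python
def growth_multiple(cagr, years):
    """Overall growth factor from compounding `cagr` for `years` years."""
    return (1 + cagr) ** years


multiple = growth_multiple(0.15, 5)
print(f"{multiple:.2f}x")  # 2.01x
```

In other words, a market compounding at that rate would roughly double over the period.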

Conclusion: The Horizon of Independent Living

Scene-Aware AR is swiftly shifting the paradigm in assistive technology, transforming devices from passive display screens into active, intelligent environmental partners.

By enabling deep semantic comprehension of the world, this technology promises profound gains in independence, safety, and cognitive support for millions.

The future of accessibility is not simply about removing barriers; it is about providing the precise, contextual information needed to navigate complex realities confidently.

The challenge now is to ensure this powerful technology is developed ethically and is made universally affordable.

Frequently Asked Questions

How does Scene-Aware AR help deaf or hard-of-hearing users?

Scene-Aware AR can provide immediate, real-time visual translations of sound events.

For example, it can recognize a fire alarm and display a prominent text warning in the user’s field of view, or it can display a text transcription of a conversation partner’s speech.

Is Scene-Aware AR currently available in consumer devices?

While full, real-time semantic segmentation across complex scenes is still emerging, the underlying technology (like basic object recognition and spatial anchors) is already integrated into modern smartphones and advanced AR/VR headsets.

Dedicated assistive devices are rapidly incorporating these advanced features in 2025.

What is the difference between AR and Scene-Aware AR?

Standard AR primarily focuses on mixing virtual and real objects based on spatial location (e.g., placing a virtual couch in your living room).

Scene-Aware AR adds intelligence by recognizing what those real objects are (e.g., this is a “couch” and that is a “wall”), allowing for contextual interactions.

How long does it take for a Scene-Aware AR system to “learn” a new room?

With current technology, the initial mapping and semantic labeling of a new, moderately complex room (like a living room) can be performed rapidly, often in just a few seconds to a minute, depending on the computing power of the device and the complexity of the scene.

What is the biggest hurdle for widespread adoption in assistive tech?

The biggest hurdles are cost and accessibility. Advanced AR glasses with the necessary processing power are currently expensive.

Widespread adoption requires the technology to become affordable and robust enough to handle the variability of real-world use without constant reliance on high-speed internet.